Measuring and examining TLS 1.3, IPv4, and IPv6 performance

Earlier in the year we enabled Transport Layer Security (TLS) 1.3 on the Fastly points of presence (POPs) for GOV.UK. Fastly have been gradually rolling out a whole set of improvements to the cache nodes in their POPs. This includes a new h2o TLS architecture, which was required for enabling TLS 1.3. I believe this upgrade also paves the way for enabling HTTP/3 and QUIC in the future, which should improve performance on connections that suffer from high packet loss (e.g. unstable, rural connections). A win-win all round! So assuming you are using a modern browser (and aren’t stuck behind a proxy that forces a TLS downgrade), you should see something like this when you next visit GOV.UK:

TLS 1.3 is the latest version of the Transport Layer Security cryptographic protocol, and it offers a number of improvements over previous versions, including:

- improved security by removing insecure or less secure ciphers (as well as insecure features)

- improved performance, as fewer network round trips (each costing one round-trip time, or RTT) are needed to establish a secure connection: one for a full TLS 1.3 handshake, compared with two for TLS 1.2

Improved security and web performance, sign me up! So when TLS 1.3 was enabled on the UK POPs for GOV.UK on 15th April, I was a little disappointed not to see anything reflected in our synthetic web performance tests in SpeedCurve, as I’d expected to see a change in the Time to First Byte (TTFB) metric. I thought it may be related to TLS session resumption, but WebPageTest gives details about this in a page’s HTML ‘Raw Details’ tab, and they are all "tls_resumed": "False". But these results are only from synthetic testing on a limited set of devices and connections, so that’s probably why. I’d guess if we were capturing Real User Monitoring (RUM) data over the same time period, we would most likely have spotted it.

So after reading Chendo’s blog post TLS 1.3 performance compared to TLS 1.2, it got me thinking: how could I replicate these tests for GOV.UK and verify (purely for my own sake) that TLS 1.3 does in fact offer a web performance improvement for our users? And does IPv4 vs IPv6 have any effect on the results (IPv6 is enabled for GOV.UK)? The rest of this blog post goes into how I did this, what was involved and what the results were.

Ways of measuring connection time

The first step in this process is being able to collect data about the connection timings. There are a few ways of measuring the time taken for TCP connections to be established. Here are a few I looked into:

Time Command

The time command is what Chendo used in the blog post I referred to earlier. The command isn’t specific to timing network activity. In fact it can be used to time any command used on the command line. It’s incredibly simple to use, just prepend time to the command you wish to time:

time npm --version

# output: 0.16s user 0.04s system 109% cpu 0.186 total

The above is the output from Zsh: 0.16s of CPU time was spent in user mode and 0.04s in kernel mode. The task got 109% of the CPU time, and took a total of 0.186 seconds to run. There’s a whole heap of customisations possible, which can be seen on the command’s man page.

cURL

curl is used in command lines or scripts to transfer data. It’s open source and pretty much used everywhere, from cars to mobile phones. The number of options available on its man-page is huge. If you need to transfer data with URLs, then cURL is the tool for you. Thankfully we will only need a very small number of settings: those related to connection timing. Joseph Scott wrote an excellent blog post back in 2011 about how to extract detailed connection timing information using cURL, which can then be used to find things like the TTFB or TLS negotiation time. Joseph has even created a very simple shortcut called curlt that outputs the timing information and response headers for a particular connection.

HTTPStat

httpstat is essentially a visualisation plugin built upon curl. It takes the curl connection timing statistics and visualises them in an easy to digest format. Very simple to install and also outputs all the response headers too, so a very handy tool for basic debugging.

One of the advantages of using cURL on its own is that you have fine-grained control of the output from the command. So for example, if you want to write a large number of results to a file as JSON or a CSV, then this is entirely possible. Given this option, this is the route I decided to take when measuring TLS 1.3 performance.

Testing performance

So there were a few specific points I wanted to cover in testing:

- what’s the impact of the distance from a user to the server when it comes to latency and TLS negotiation time

- semi-automate a way to collect a ‘large’ amount of data related to connection metrics

- could I spot any difference in connection metrics when using IPv6 or IPv4

The last point is only really there because my ISP has IPv6 available (and enabled), as does GOV.UK. So I was just curious if anything could be seen in the resulting data when using IPv6 vs IPv4. Does IPv6 offer web performance advantages? There are a number of posts out there that compare performance. Some seem to suggest it does, others not.

Collecting results

As mentioned before I’m using cURL to capture detailed timing information. I captured a set of data which could then be plotted on a graph for each of the connection scenarios. So let’s go through how this is done step by step.

Capture a single request / response

Using the method Joseph mentions in his blog post, it is very simple to capture detailed timing information from a URL. Once the curl-format.txt file has been created, run the following command:

curl -w "@curl-format.txt" -o /dev/null -s https://www.gov.uk

With the body discarded (-o /dev/null) and progress output silenced (-s), what remains is the detailed timing information, which looks like this:

{

"time_namelookup": 0.045055,

"time_connect": 0.058824,

"time_appconnect": 0.107569,

"time_pretransfer": 0.109468,

"time_redirect": 0.000000,

"time_starttransfer": 0.127577,

"time_total": 0.130754

}
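
The curl-format.txt file itself isn’t shown in this post, so here is a minimal sketch of one that would produce output in the shape above. The %{...} names are cURL’s own write-out variables; the JSON-style layout and key names are my assumption, chosen to match the sample output, and Joseph’s original file may well differ.

```shell
#!/bin/bash
# Write a cURL write-out template to curl-format.txt in the current directory.
# The quoted heredoc delimiter ('EOF') stops the shell from expanding anything,
# so the %{...} placeholders reach cURL untouched.
cat > curl-format.txt <<'EOF'
{
"time_namelookup": %{time_namelookup},
"time_connect": %{time_connect},
"time_appconnect": %{time_appconnect},
"time_pretransfer": %{time_pretransfer},
"time_redirect": %{time_redirect},
"time_starttransfer": %{time_starttransfer},
"time_total": %{time_total}
}
EOF
```

cURL replaces each placeholder with the measured value in seconds when the file is passed via -w "@curl-format.txt".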

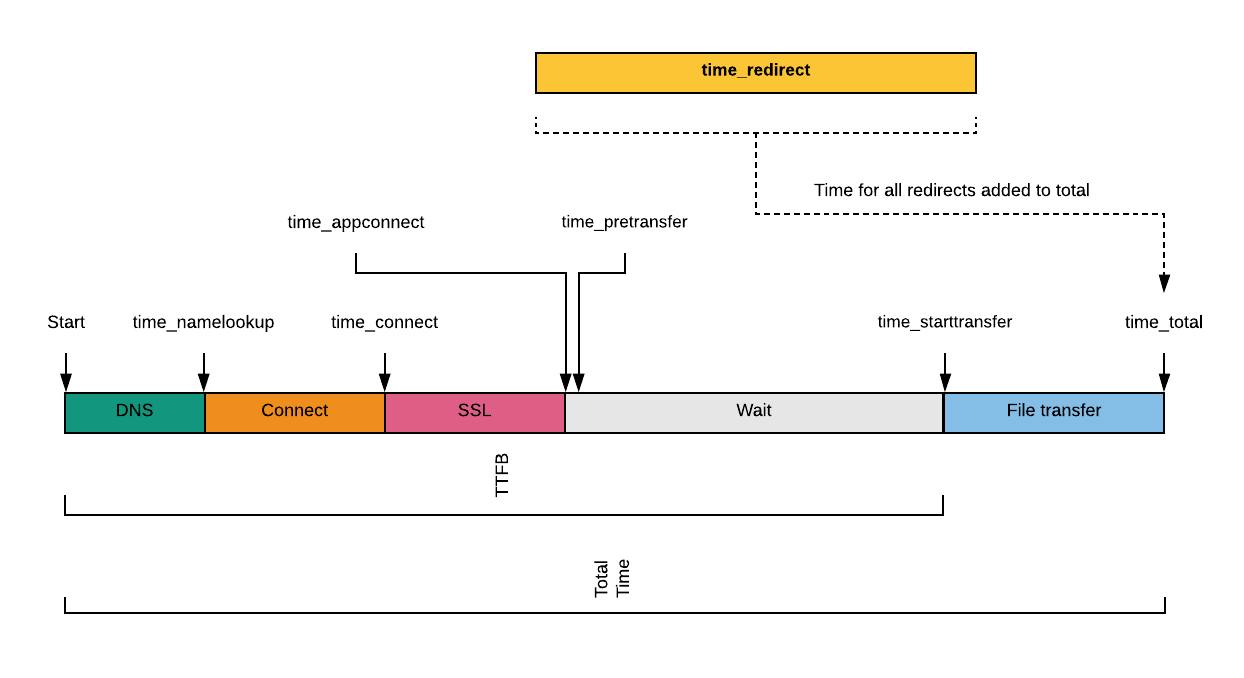

What does each of these values mean? They are detailed on the cURL man page, but I’ve also pulled together a quick visualisation to help you understand them too:

It’s important to remember that these metrics are cumulative: each one is measured from the start of the request, not from the end of the previous activity. This is solved with a little simple data manipulation in the spreadsheets (see results for more info on this).
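
I did this manipulation in a spreadsheet, but as a rough sketch the same subtraction can be done on the command line. The function name phase_durations is made up for illustration, and the column order (DNS, connect, TLS, pretransfer, TTFB, total) is an assumption based on the sample output above with time_redirect left out.

```shell
#!/bin/bash
# Turn one CSV row of cumulative timings into per-phase durations by
# subtracting each column from the one after it. The first column (DNS)
# is already a duration, so it is printed unchanged.
phase_durations() {
  awk -F',' '{
    printf "%.6f", $1
    for (i = 2; i <= NF; i++) printf ",%.6f", $i - $(i - 1)
    printf "\n"
  }'
}

# Values taken from the single-request sample output shown earlier.
echo "0.045055,0.058824,0.107569,0.109468,0.127577,0.130754" | phase_durations
# prints 0.045055,0.013769,0.048745,0.001899,0.018109,0.003177
```

Read this way, the TLS negotiation in that sample took roughly 0.049 seconds (0.107569 minus 0.058824), rather than the 0.108 seconds the raw time_appconnect value might suggest.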

Capture multiple request/responses over time

Now that we can capture a single response from a URL, what about if we wanted to capture timing information over a period of time? This is where a simple bit of shell script comes in handy. Note that the curl_format has been moved into the shell script for ease.

#!/bin/bash

# create `timing-data.sh` and make it executable (chmod +x timing-data.sh)

curl_format='{

"time_dns": %{time_namelookup},

"time_connect": %{time_connect},

"time_appconnect": %{time_appconnect},

"time_pretransfer": %{time_pretransfer},

"time_ttfb": %{time_starttransfer},

"time_total": %{time_total}

}'

# used as headers for the CSV file

echo '["DNS","Connect","SSL","Wait","TTFB","Total Time"]'

total=521

for ((n=0;n<total;n++))

do

curl -w "$curl_format" -k --compressed -s -o /dev/null "$@"

sleep 0.3 #space the timings out slightly

done

The above script is used like so:

timing-data.sh https://www.gov.uk --tls-max 1.2

The script goes off and captures a large number of timing results (in this case 521). I’ve omitted time_redirect from the above script as I know this will always be 0 in my case, but you could leave it in if you are using the script on a URL with redirects. The above test took approximately 10 minutes to run. Here I’m capping the TLS version to 1.2 (--tls-max 1.2), as this allows us to compare different versions. Without this cap, cURL would negotiate the highest version that both it and the server support, which is 1.3 for www.gov.uk.

So the script above works, but it isn’t capturing the results to a file, or converting them to a format that can be easily imported into a spreadsheet and plotted. It comes out as one long stream of JSON objects full of timing info. Time to bring in jq.

jq is a Swiss army knife for manipulating JSON data on the command line. You can easily transform JSON data into any structure you need:

timing-data.sh https://www.gov.uk --tls-max 1.2 | jq -r '[.[]] | @csv'

Here I’m taking the JSON output from the cURL response and piping it into jq. I’m then constructing an array made up of each element in the input (the timing values). Then lastly I’m piping the array into the @csv formatter to output the values as a CSV. Once converted to a CSV, the data can be easily imported into a spreadsheet (e.g. Google Sheets) for further manipulation and graphing.
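
To make that transformation concrete, here is a small offline sketch that feeds the same jq filter a header array and one timing object, mimicking what the script emits. The helper name to_csv is hypothetical, and the values are borrowed from the earlier sample output.

```shell
#!/bin/bash
# to_csv is a made-up helper name wrapping the jq filter used in this post.
# [.[]] collects every element (array) or value (object) into an array,
# and @csv renders that array as one CSV row.
to_csv() {
  jq -r '[.[]] | @csv'
}

# One header array followed by one timing object, one JSON value per line.
printf '%s\n' \
  '["DNS","Connect","SSL"]' \
  '{"time_dns": 0.045055, "time_connect": 0.058824, "time_appconnect": 0.107569}' |
  to_csv
```

Strings come out quoted and numbers bare, which is why the header row and the data rows can share the same filter.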

Lastly, let’s make sure we write all this manipulated data to a file so we can actually use it. A final bit of shell script can do this for us:

# final script

timing-data.sh https://www.gov.uk --tls-max 1.2 | jq -r '[.[]] | @csv' > output-test-results.csv

Here we redirect the output to a file. The file is created automatically if it doesn’t exist.

Capture multiple locations

The script is now capturing a large amount of connection timing data from a URL, but we have no control over which server cURL gets this data from. As mentioned above, I’d like to see how a server’s geographic location (CDN POP) affects TLS and connection negotiation speed. Thankfully cURL comes with a useful --resolve option that allows us to do this:

# final script

timing-data.sh https://www.gov.uk --resolve www.gov.uk:443:[another_edge_node_ip] --tls-max 1.2 | jq -r '[.[]] | @csv' > output-test-results.csv

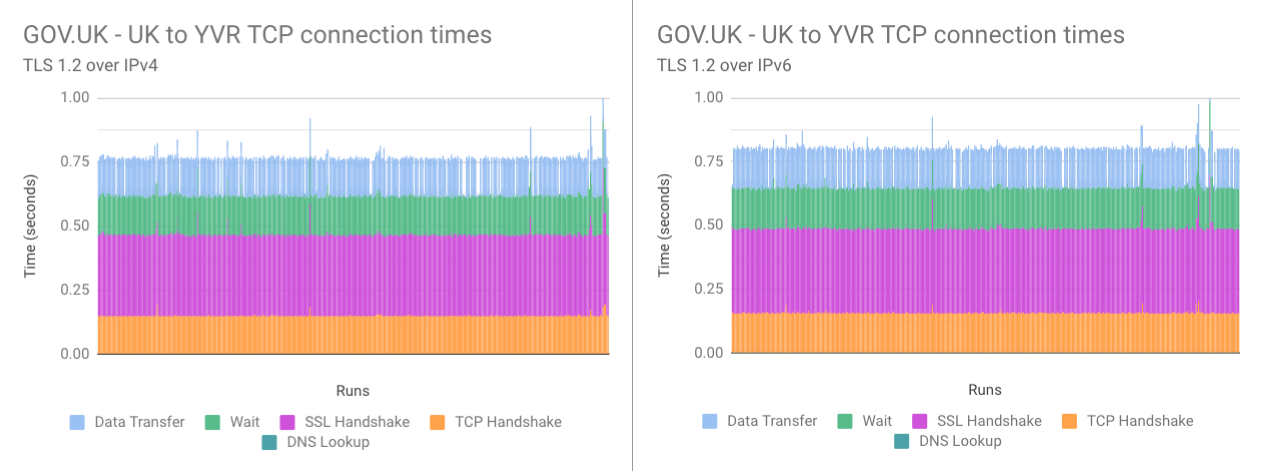

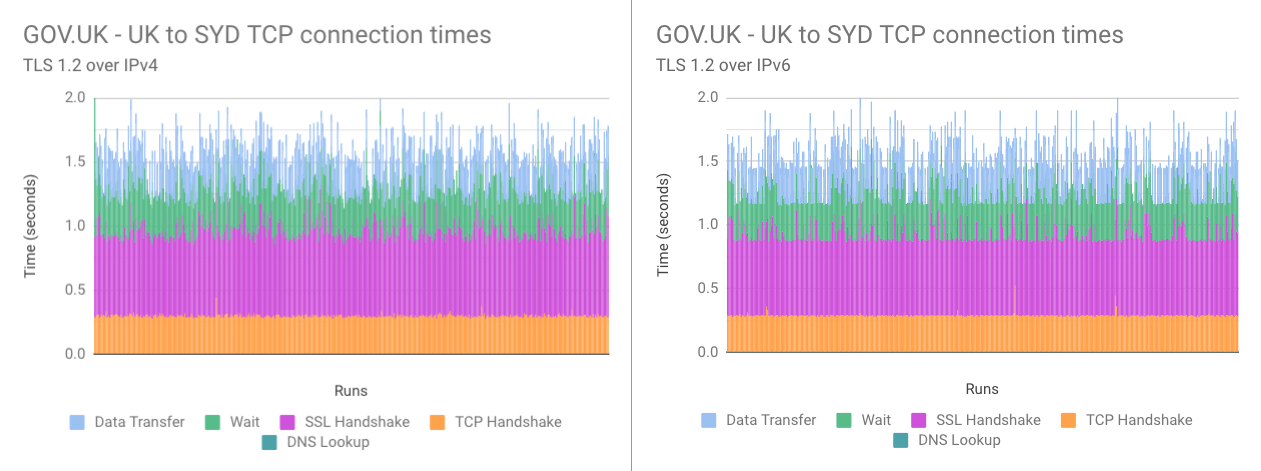

Here we are asking cURL to resolve any requests for www.gov.uk on port 443 to a different IP address. So instead of allowing the CDN to choose the closest geographic location for our request (most likely London for me), we can tell it to request it from elsewhere. For example Sydney, or Vancouver. Why those locations? Well they are 10,585 and 4,663 miles away respectively. Therefore the latency from these locations will be much higher, i.e. a higher round-trip time (RTT). So it will be much easier to see the difference between TLS 1.2 and 1.3 (and indeed IPv4 and IPv6 if any can be seen) due to the greater distance to travel.

Of course this is all very contrived, as the very purpose of using a CDN is to minimise the latency between a user and the server, thus giving better web performance. To do this CDNs use technologies like Anycast, Unicast, Broadcast, and Multicast, the details of which I won’t go into here (because I don’t fully understand them myself!). But this --resolve method is a great way to test the TLS negotiation improvements in 1.3.

So now we have a method of capturing a large amount of connection timing data from an origin, and we can store it in a format that is easily imported into a graphing tool. For graphing I’m using Google Sheets, as it has lots of options available and is easily sharable.

Testing

In terms of testing I chose three of Fastly’s POPs: UK (London), Canada (Vancouver - YVR) and Australia (Sydney - SYD). London to my home is obviously very close (short distance), my home to Vancouver is roughly 4,600 miles (medium distance), and my home to Sydney is roughly 10,500 miles (long distance). For each one I would request the GOV.UK homepage, but force the use of a particular POP. I also forced the use of either IPv4 or IPv6 with the --resolve option. Both the IPv4 and the IPv6 tests were run concurrently for a particular TLS version, so as to keep the network conditions consistent. Each cURL command was run 521 times, with a 0.3 second delay between each run (so as to elongate the testing period). Each test took approximately 10 to 15 minutes to complete, with no other significant activity happening over my home network during the runs (so as to minimise last-mile interference).
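
As a rough sketch of what "run concurrently" can look like in practice, the two captures can be started as background jobs and waited on together. Everything named here is an assumption for illustration: the run_pair function, the output filenames, timing-data.sh sitting in the current directory, and above all the two IP addresses, which are documentation-only placeholders rather than real Fastly edge nodes.

```shell
#!/bin/bash
# Sketch: capture IPv4 and IPv6 timings for one POP at the same time.
# Both addresses are documentation-only placeholders, NOT real Fastly nodes;
# swap in the IPv4 and IPv6 addresses of the POP you want to test.
IPV4_NODE="192.0.2.10"
IPV6_NODE="2001:db8::10"

run_pair() {
  local tls_max="$1"
  # Each pipeline is sent to the background with & so both run side by side.
  ./timing-data.sh https://www.gov.uk --resolve "www.gov.uk:443:$IPV4_NODE" --tls-max "$tls_max" |
    jq -r '[.[]] | @csv' > "ipv4-tls$tls_max.csv" &
  ./timing-data.sh https://www.gov.uk --resolve "www.gov.uk:443:$IPV6_NODE" --tls-max "$tls_max" |
    jq -r '[.[]] | @csv' > "ipv6-tls$tls_max.csv" &
  wait   # return only once both background captures have finished
}

# Nothing runs unless a TLS version is passed, e.g. ./run-pair.sh 1.2
if [ "$#" -gt 0 ]; then
  run_pair "$1"
fi
```

Running it once with 1.2 and once with 1.3 would give the four CSV files (two protocols, two TLS versions) needed for one POP.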

NOTE: For those wanting to know how IPv4 or IPv6 is chosen in practical terms, there’s a whole RFC called ‘Happy Eyeballs Version 2’ (RFC 8305) dedicated to it. TL;DR: The client sends two DNS queries for the hostname you are requesting, one for the A record (IPv4) and one for the AAAA record (IPv6). These are sent at almost the same time. Broadly, of the two DNS queries, the one that completes first decides the protocol used to start the connection. But it’s not quite that simple, as IPv6 is given two advantages:

- the AAAA (IPv6) query is sent first, before the A query

- if the A (IPv4) answer is received by the client first, there is a recommended wait of 50ms to allow the AAAA query to complete

This is essentially trying to migrate internet traffic towards IPv6 (assuming it is available on the client’s connection and at the server).
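
The 50ms head start can be pictured with a toy model. This is only a sketch of the DNS waiting step described in the note, not an implementation of the RFC: it ignores the connection racing that follows, and the function name and the example timings are invented for illustration.

```shell
#!/bin/bash
# Toy model of the Happy Eyeballs resolution delay.
# Arguments: arrival time of the A answer and of the AAAA answer,
# both in whole milliseconds since the queries were sent.
first_attempt_family() {
  local a_ms="$1" aaaa_ms="$2"
  local resolution_delay_ms=50   # the recommended wait mentioned in the note
  # IPv6 goes first if its answer arrives before the A answer,
  # or no more than 50ms after it.
  if [ "$aaaa_ms" -le $((a_ms + resolution_delay_ms)) ]; then
    echo "IPv6"
  else
    echo "IPv4"
  fi
}

first_attempt_family 20 60   # AAAA is 40ms behind the A answer: prints IPv6
first_attempt_family 20 90   # AAAA is 70ms behind the A answer: prints IPv4
```

So under this simplified model IPv4 only gets the first connection attempt when the AAAA answer is more than 50ms slower than the A answer.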

Results

Before I get into the results let me provide you with links so you can interpret them for yourself should you wish to:

- IPv4 TLS 1.2 vs IPv4 TLS 1.3 summary

- IPv6 TLS 1.2 vs IPv6 TLS 1.3 summary

- IPv4 TLS 1.2 vs IPv6 TLS 1.2 summary

- IPv4 TLS 1.3 vs IPv6 TLS 1.3 summary

Both the data and larger versions of the graphs can be found in the other sheets at those links. I’ve also compiled the timing difference between TLS versions and protocol versions at the different percentiles, both for the TLS negotiation and the total time (right of the graphs).
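
I compiled those percentile figures in Google Sheets, but for anyone who would rather stay on the command line, here is a hedged sketch of the same idea. The function names percentile and pct_improvement are hypothetical, the percentile uses the simple nearest-rank method (a spreadsheet may interpolate and give slightly different values), and the input is assumed to be a CSV with one header row, like the files produced earlier.

```shell
#!/bin/bash
# Print the p-th percentile (nearest-rank method) of one numeric CSV column.
# Usage: percentile FILE COLUMN_NUMBER P   (P is a whole number, 1 to 100)
percentile() {
  local file="$1" col="$2" p="$3"
  # Skip the header row, pull out the column, sort numerically, pick the rank.
  tail -n +2 "$file" | cut -d',' -f"$col" | sort -n | awk -v p="$p" '
    { v[NR] = $1 }
    END {
      if (NR == 0) exit 1
      rank = int((p * NR + 99) / 100)   # ceiling of p percent of the row count
      if (rank < 1) rank = 1
      print v[rank]
    }'
}

# Percentage reduction going from a "before" timing to an "after" timing.
# Usage: pct_improvement BEFORE AFTER
pct_improvement() {
  awk -v before="$1" -v after="$2" 'BEGIN { printf "%.1f\n", (before - after) / before * 100 }'
}
```

For example, pct_improvement 0.10 0.05 prints 50.0. Bear in mind that cURL’s timings are cumulative, so to compare TLS negotiation time on its own the connect column needs subtracting from the SSL column before taking a percentile.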

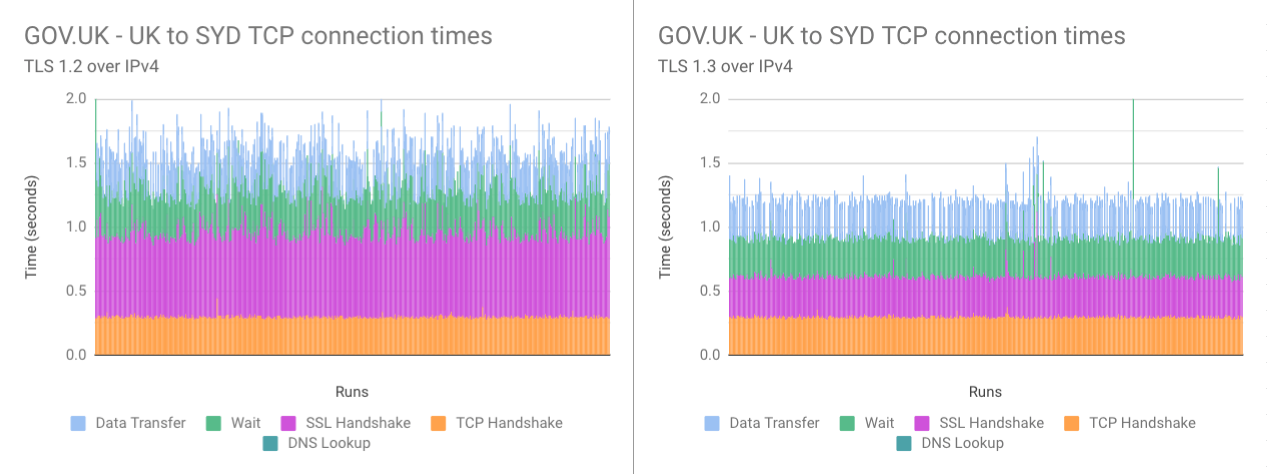

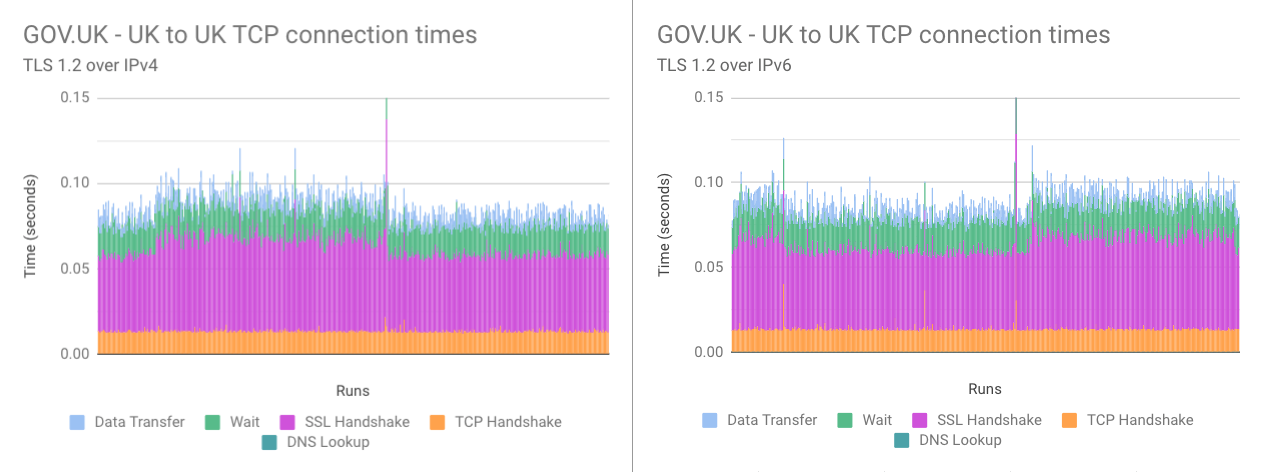

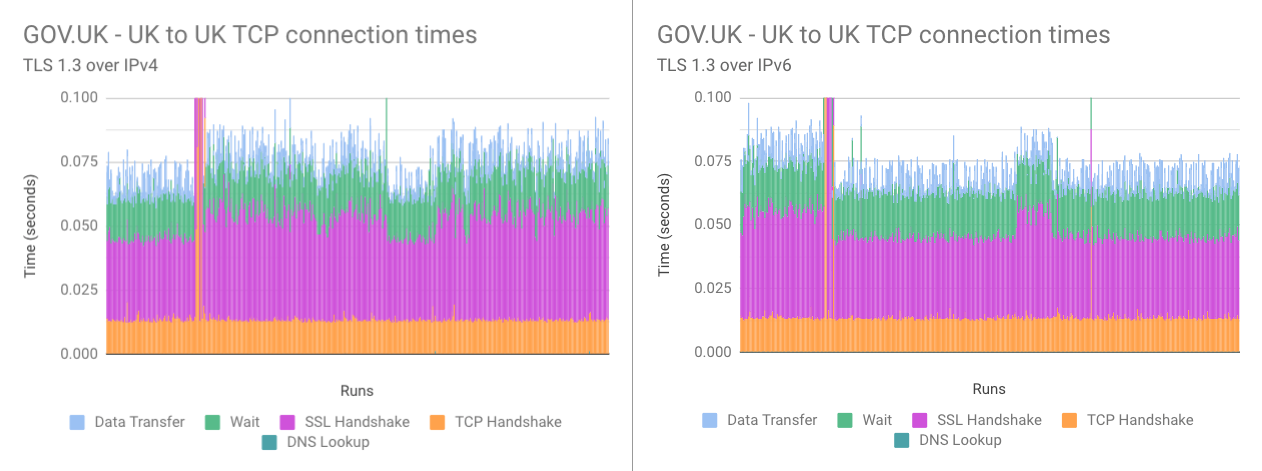

TLS 1.2 vs TLS 1.3

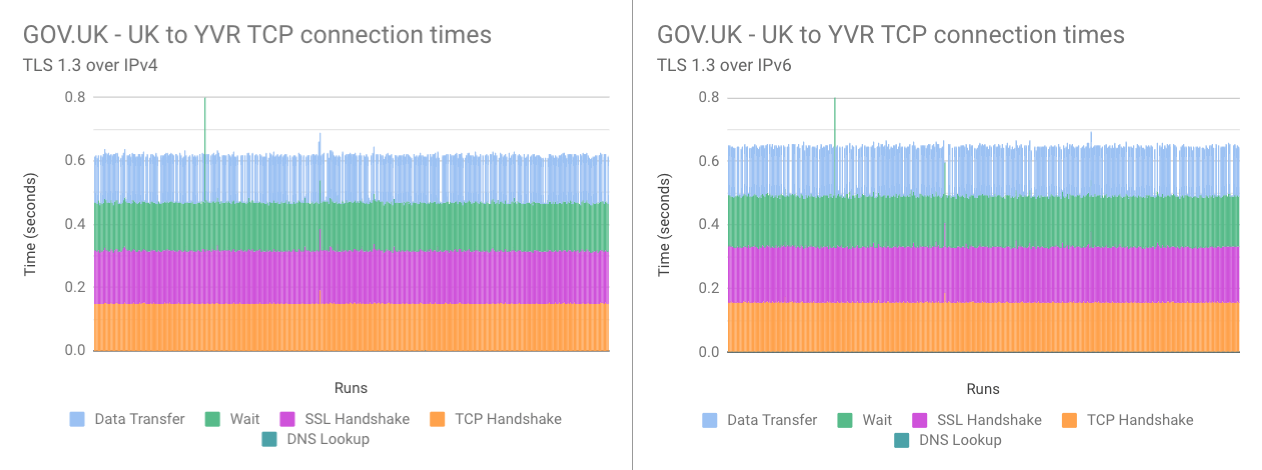

This is an easy one to call. TLS 1.3 beats 1.2 in all scenarios, and by a fairly substantial margin too: usually a 20-50% improvement in the median TLS negotiation time for both IPv4 and IPv6. It is very easy to see this by looking at the graphs. The purple ‘SSL handshake’ is always a lot thinner with TLS 1.3, meaning it took less time to negotiate the secure connection. This in turn leads to a significant total time reduction in nearly all results, usually between 10-30%. Not a bad saving considering all you need to do is “enable it”!

Now this isn’t ground breaking news, as it is completely expected. This fact is touted as one of the advantages of enabling TLS 1.3. But I’m very glad to be able to verify that this is the case for GOV.UK. So users with a browser that supports TLS 1.3 should see a significant improvement in TTFB under many circumstances.

But wait, there’s more! TLS 1.3 also supports something called 0-RTT, or Zero Round Trip Time Resumption. This allows a client that has visited a website previously to pick up where it left off: using a key saved from the earlier session, it can send its first request alongside the handshake rather than waiting for the negotiation to finish. When in use I’d expect the purple in the graphs above to disappear, as the time spent waiting for a secure connection before sending data is removed. That’s a pretty impressive web performance boost (when it eventually becomes better supported).

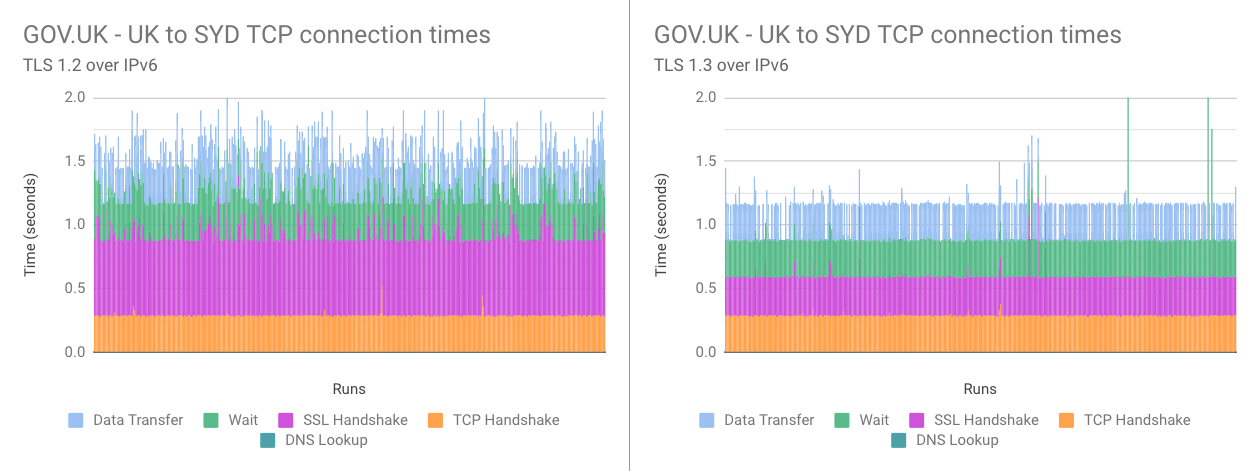

IPv4 vs IPv6

TLS 1.3 is quicker than 1.2, that is plain to see. But IPv4 vs IPv6 isn’t as clear cut. As mentioned, both IPv4 and IPv6 tests were run at the same time so that the network conditions were consistent.

Comparing TLS 1.2 over IPv4 and IPv6 (negotiation speed, total time):

- UK to UK, IPv6 was marginally slower (5%, 3% at median)

- UK to Vancouver, IPv6 was marginally slower (5%, 5% at median)

- UK to Sydney, IPv6 was marginally quicker (7%, 4% at median)

Comparing TLS 1.3 over IPv4 and IPv6 (negotiation speed, total time):

- UK to UK, IPv6 was significantly quicker (20%, 4% at median)

- UK to Vancouver, IPv6 was marginally slower (4%, 5% at median)

- UK to Sydney, IPv6 was marginally quicker (4%, 2% at median)

So what’s going on here? Well, in all honesty the sample size of... just me, isn’t going to answer this question. It’s important to understand that with IPv4 and IPv6, you essentially have two independent connections to the internet. So you have two different paths from client to server. Although the tests were run at the same time, the paths they ran over are separate. Meaning that each path:

- may have different bandwidth available

- may have more congestion than the other

- may have more packet dropouts than the other

These are just a few considerations. Avery Pennarun explains it very well in his excellent ‘IPv4, IPv6, and a sudden change in attitude’ blog post. So it was an interesting experiment (for me), but I wouldn’t rely on the accuracy of the IPv4 vs IPv6 results. They were accurate for my connection at the point in time I measured them, but in no way are they conclusive. It’s also worth remembering that IPv6 adoption is still low considering it became an IETF Draft Standard back in December 1998. There are also huge swathes of the world that simply haven’t adopted it yet. I guess now that globally we have run out of IPv4 addresses, there should be some pressure to move adoption forwards.

Conclusion

So in this blog post I’ve covered a range of topics, from how to collect data using curl and convert it into a format that can be plotted (CSV), all the way to IPv4 vs IPv6. Results show that enabling TLS 1.3 is a good idea. It offers more security and better performance for your users. It’s also worth noting that TLS 1.3 will be a requirement to use the QUIC transport layer network protocol in the future. This will pave the way to HTTP/3. And once 0-RTT becomes more prevalent, for repeat website visits the purple on the graphs displayed above will disappear completely. Even faster connections for all (at least for those that use a browser that supports it anyway).

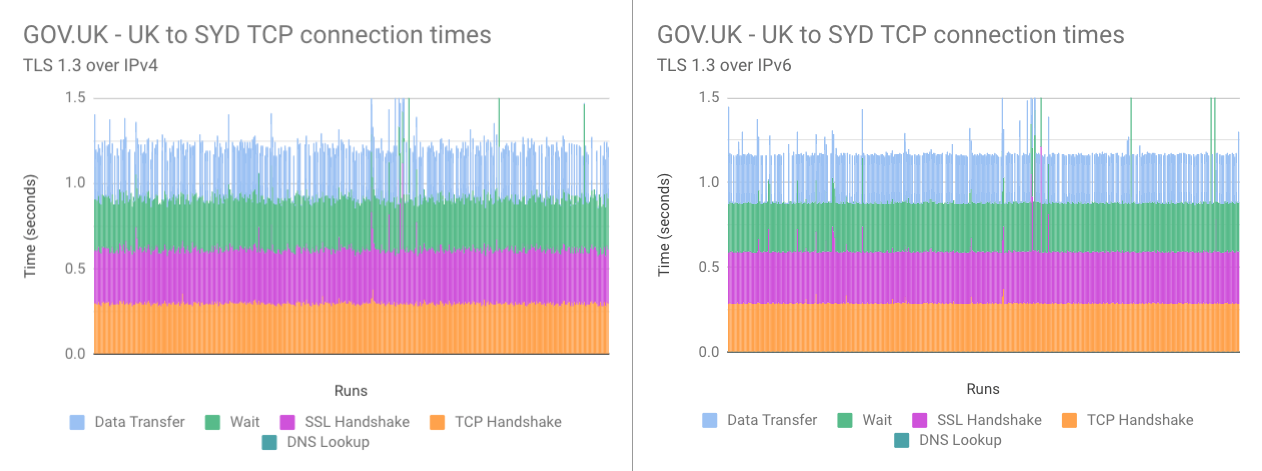

Update: Using Google Analytics server connection time

After publishing this article I got chatting to Rockey Nebhwani who suggested looking at Google Analytics (GA) data to see if I could spot the change. I always tend to forget about the performance timing data that GA captures. It has some limitations (sample size being unfixable and its use of averages), but if you have no other data sources then it’s better than nothing! Turns out it’s very easy to spot where the change occurred when looking over 5 months of data:

Admittedly it’s not dropped a huge amount, only 10ms or so. But considering this is the average time of heavily sampled data (14,302,686 page views sent sample data over 5 months), it shows that users are seeing an improvement. For those interested in how you find this data in GA: Behavior > Site Speed > Overview > Avg. Server Connection Time.

Post changelog:

- 30/07/20: Initial post published. Thanks go out to Joseph Scott, Robin Marx, Chendo, Lucas Pardue, Andy Davies, Barry Pollard, and Radu Micu for pointing me to a tonne of resources and answering lots of questions for this post.

- 30/07/20: Updated TLS 1.3 advantages to mention insecure ciphers have been removed in 1.3, rather than new more secure ones added (Thanks Jamie H).

- 05/08/20: Added graph of GA data (Avg. Server Connection Time) that shows when TLS 1.3 was enabled (Thanks Rockey Nebhwani).