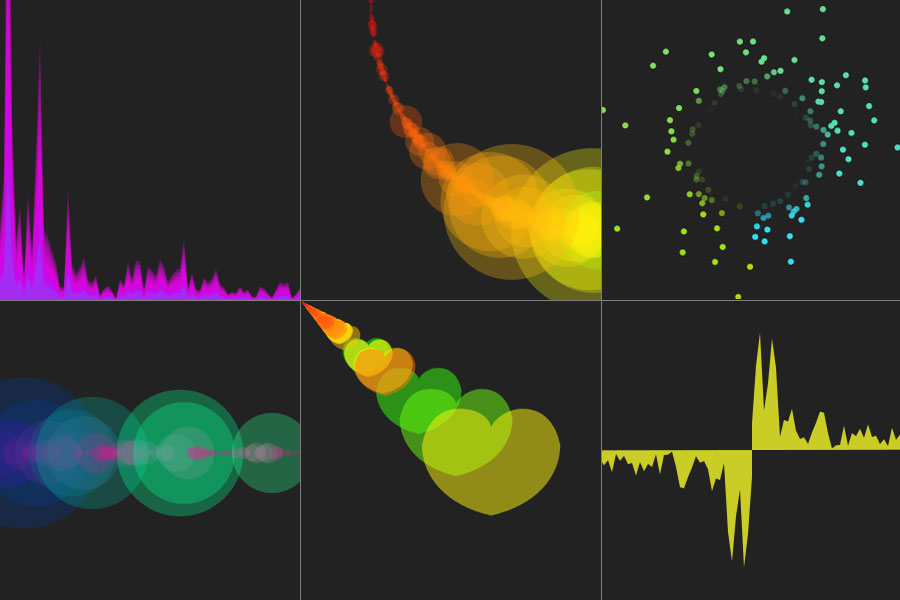

Audio Visualisation using HTML5 and Canvas

I’ve been interested in audio visualisations for some time. I first encountered them many years ago, when I started using Sonique as my default audio player. I then moved on to the superb Milkdrop and G-Force plug-ins for Winamp, which are both in a league of their own for audio visualisation. So I jumped at the chance to create my own when I saw the Audio Data API on the Mozilla wiki.

The Audio Data API is a draft extension specification that allows developers to read and write raw audio data. As it’s only a draft specification, I’ve had to use a custom build of Firefox 3.7 with the extension hacked into it (which you can download from here). The visualisations rely on a custom browser event, shown here:

<audio src="audio/Abigail.ogg" id="player" controls="true" onaudiowritten="visualisation.audioWritten(event);"></audio>

The onaudiowritten event fires whenever audio is played. The raw data is passed to a pre-calculated FFT (Fast Fourier Transform), from which you can start generating your visualisations, and is then written to the audio layer for users to hear.
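As a rough sketch of what a handler like visualisation.audioWritten might do with that FFT data, the example below averages the spectrum into a handful of bars and draws them on a canvas. It is not the code behind my demo. It assumes the draft exposes the pre-calculated FFT on the event as mozSpectrum, that the magnitudes fall roughly between 0 and 1, and that the page contains a canvas with the hypothetical id "visualiser". The helper names spectrumToBars and drawBars are made up for illustration.

```javascript
// Average the spectrum into `barCount` buckets and scale each to a pixel height.
// Assumption: `spectrum` holds non-negative magnitudes, roughly in the range 0..1.
function spectrumToBars(spectrum, barCount, maxHeight) {
  var bars = [];
  var bucketSize = Math.floor(spectrum.length / barCount);
  if (bucketSize < 1) {
    return bars; // not enough data to fill every bar
  }
  for (var i = 0; i < barCount; i++) {
    var sum = 0;
    for (var j = 0; j < bucketSize; j++) {
      sum += spectrum[i * bucketSize + j];
    }
    var average = sum / bucketSize;
    // Clamp so an unexpectedly loud bucket cannot overflow the canvas.
    bars.push(Math.min(maxHeight, average * maxHeight));
  }
  return bars;
}

// Draw one rectangle per bar, rising from the bottom edge of the canvas.
function drawBars(ctx, bars, width, height) {
  ctx.clearRect(0, 0, width, height);
  var barWidth = width / bars.length;
  for (var i = 0; i < bars.length; i++) {
    // Leave a 1px gap between neighbouring bars.
    ctx.fillRect(i * barWidth, height - bars[i], barWidth - 1, bars[i]);
  }
}

var visualisation = {
  audioWritten: function (event) {
    // Assumption: the draft API attaches the pre-calculated FFT as mozSpectrum.
    var spectrum = event.mozSpectrum;
    if (!spectrum) {
      return;
    }
    // Hypothetical canvas id; use whatever element your page provides.
    var canvas = document.getElementById("visualiser");
    var ctx = canvas.getContext("2d");
    var bars = spectrumToBars(spectrum, 32, canvas.height);
    drawBars(ctx, bars, canvas.width, canvas.height);
  }
};
```

Keeping the number crunching in spectrumToBars, separate from the drawing, makes it easy to swap in a different visualisation while reusing the same bucketed data.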

You can view a demo of my visualisations or, if you don’t have the custom version of Firefox installed, watch a short video I’ve created of them in action. Each visualisation lasts approximately 10 seconds before changing. The music is courtesy of a friend’s band called ‘Good Dangers’.

Nooshu HTML5 Visualisations from Matt Hobbs on Vimeo.

I hope the Audio Data API is approved and other browsers implement it, as it’s an exciting use of browser technology and definitely an area I’ll be keeping an eye on.